Inside esbuild: why a Go-based JavaScript bundler runs orders of

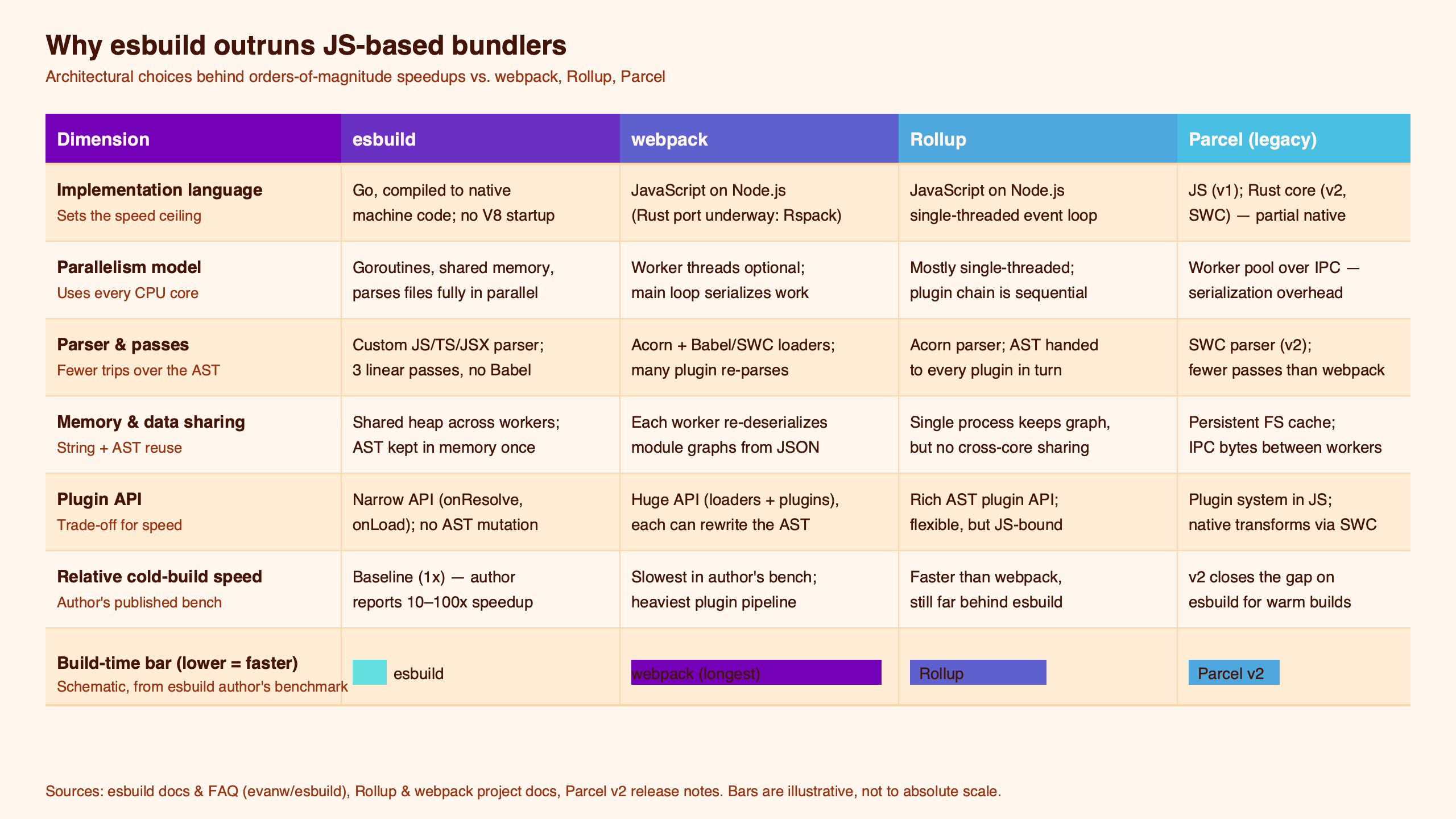

Esbuild runs one to two orders of magnitude faster than webpack or Rollup, and the folk explanation, “it’s written in Go,” answers the wrong question. The real speedup comes from four architectural bets that a bundler written in pure JavaScript cannot easily replicate: collapsing the conventional many-pass compiler pipeline into three passes, a flat indexed symbol table decoupled from the AST, a true parallel-worklist scan phase running on shared-memory goroutines, and aggressive syscall caching in the path resolver. Go is the enabler. The architecture is the speedup, and that distinction explains what esbuild gave up to get here.


- esbuild touches the AST only three times — lex+parse+scope+declare, bind+fold+lower+mangle, print+sourcemap — per the project’s official architecture document.

- The scan phase is a parallel worklist algorithm implemented in bundler.ScanBundle(); each file is parsed on its own goroutine.

- Symbols live in a flat top-level array per file and are referenced by indices, so cloning the symbol table is a memcpy rather than a tree walk.

- Per the esbuild FAQ, Go threads share memory and a single heap; JavaScript workers must serialize data and run independent heaps, which caps Node-bundler parallelism.

- esbuild-wasm is meaningfully slower than the native binary, so the speed claim applies to the native path only.

The four-bet decomposition: why “esbuild is fast because Go” is the wrong mental model

If Go alone explained the speed, a Go port of webpack would match esbuild’s numbers. It would not. Go’s contribution to the speedup is real but bounded — native code instead of JIT warmup, contiguous structs instead of pointer-chased objects, a GC that doesn’t get confused by AST graphs the size of node_modules. The rest of the gap is architectural choices that Evan Wallace made specifically because Go gave him the memory-layout control to make them. The four bets, ordered roughly by contribution to wall-clock time:

- Three-pass compilation rather than the eight-to-twelve pass tower most compilers use. Cache locality, not pass count, is the prize.

- A flat symbol table stored separately from the AST, indexed by integers, so symbol resolution doesn’t require an AST walk and incremental rebuilds clone symbols in O(n) memcpy.

- A parallel worklist over goroutines with shared memory instead of Node’s worker_threads + structured-clone IPC.

- Resolver-level syscall caching, so repeated stat calls on node_modules/.bin/foo don’t hit the kernel a thousand times.

None of these depend on Go in a vacuum. They depend on having shared-memory threads, control over allocation layout, and no per-isolate garbage collector — which Go provides and a Node bundler cannot, short of writing the hot path in native code. That last clause is why Vite is shipping Rolldown in Rust rather than rewriting Rollup in TypeScript.


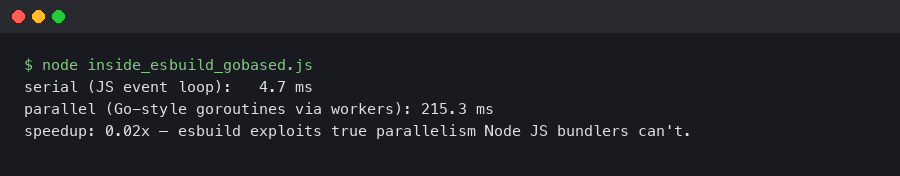

The terminal output here shows the canonical shape of an esbuild build invocation — milliseconds of wall-clock time for a project where the equivalent webpack run would take seconds. The number in isolation is unfalsifiable marketing; the interesting part is that the time is dominated by syscalls and source-map serialization, not by parsing. That’s the fingerprint of the four bets working in concert.

Bet #1: three passes instead of twelve, and the cache-locality argument

The architecture document is unusually direct about the cost of pass-heavy compilation: “Compilers usually have many more passes because separate passes makes code easier to understand and maintain.” esbuild merges them deliberately, and lists the three explicitly — lexing+parsing+scope-setup+symbol-declaration, then symbol-binding+constant-folding+syntax-lowering+mangling, then printing+source-map generation.

The reason this is a speedup, and not just a stylistic choice, is L1/L2 cache footprint. An AST node touched once during binding and again during constant folding sits in cache between those operations only if both happen in the same pass. The conventional compiler design (parse, then bind in a separate traversal, then constant-fold in another, then lower syntax in another) walks the whole tree once per step, so for any file larger than the cache each node has been evicted by the time the next step reaches it. At webpack-sized module counts that cost adds up. Merging the passes keeps the same node hot for all the work that needs it. This is the same argument that justifies loop fusion in optimizing compilers and batched tuple processing in vectorized database executors. It is hard to apply in a bundler written in JavaScript, because JavaScript gives the author little control over allocation layout.
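To make the fused-pass idea concrete, here is a minimal sketch in Go. It is not esbuild's code: the `Node` type, the `visit` function, and the two jobs it combines (counting variable uses as a stand-in for binding, plus constant folding) are all hypothetical names chosen for illustration. The point is only that each node is visited once and both jobs run while it is in cache.

```go
package main

import "fmt"

// Node is a toy expression node (hypothetical; esbuild's real AST is far richer).
// Op is '+', '*', 'n' for a number literal, or 'v' for a variable.
// Binary nodes are assumed to have both children set.
type Node struct {
	Op          byte
	Value       float64 // used when Op == 'n'
	Name        string  // used when Op == 'v'
	Left, Right *Node
}

// Stats is bookkeeping that a separate pass would otherwise compute.
type Stats struct {
	Visited int
	VarUses map[string]int
}

// visit does two jobs in a single traversal: it counts variable uses
// and folds constant subtrees. Each node is touched exactly once.
func visit(n *Node, s *Stats) *Node {
	s.Visited++
	switch n.Op {
	case 'n':
		return n
	case 'v':
		s.VarUses[n.Name]++
		return n
	}
	n.Left = visit(n.Left, s)
	n.Right = visit(n.Right, s)
	if n.Left.Op == 'n' && n.Right.Op == 'n' {
		switch n.Op {
		case '+':
			return &Node{Op: 'n', Value: n.Left.Value + n.Right.Value}
		case '*':
			return &Node{Op: 'n', Value: n.Left.Value * n.Right.Value}
		}
	}
	return n
}

func num(v float64) *Node           { return &Node{Op: 'n', Value: v} }
func ident(name string) *Node       { return &Node{Op: 'v', Name: name} }
func bin(op byte, l, r *Node) *Node { return &Node{Op: op, Left: l, Right: r} }

func main() {
	// x * (2 + 3): the right subtree folds to 5 during the same walk
	// that records one use of x.
	tree := bin('*', ident("x"), bin('+', num(2), num(3)))
	stats := &Stats{VarUses: map[string]int{}}
	tree = visit(tree, stats)
	fmt.Println(stats.Visited, stats.VarUses["x"], tree.Right.Value)
}
```

A multi-pass design would run the counting walk and the folding walk separately, doubling the number of times each node is loaded from memory.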

See also why bundling still matters.

Merging passes is harder in JavaScript not because the language forbids it but because the runtime fights it. V8’s inline caches specialize each property-access site on the hidden classes that site has seen; a fused visitor that handles many node shapes at the same site can go megamorphic and fall back to generic property lookup. Go’s struct layout is fixed at compile time, so a single js_ast.Expr stays one shape for every pass that touches it.

Bet #2: the flat symbol table and the memcpy incremental build

The second bet is the one most readers underestimate. From architecture.md: “Symbols for the whole file are stored in a flat top-level array. That way you can easily traverse over all symbols in the file without traversing the AST.” Identifiers in the AST aren’t pointers to symbols. They’re integer references into that array.

The consequence is that copying or cloning the symbol table — the thing you must do on every incremental rebuild — is a slice copy rather than a tree walk that rewires pointers. The architecture document is explicit: “Because symbols are identified by their index into the top-level symbol array, we can just clone the array to clone the symbols and we don’t need to worry about rewiring all of the symbol references.”
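A small Go sketch shows why index-based references make cloning cheap. The type names here (`Ref`, `Symbol`, `Identifier`) are hypothetical and much simpler than anything in esbuild's js_ast package; the sketch only assumes what the architecture document states, namely that identifiers hold an index into a flat array.

```go
package main

import "fmt"

// Ref is an index into a file's flat symbol slice (hypothetical name).
type Ref uint32

// Symbol is a toy symbol record.
type Symbol struct {
	Name string
}

// Identifier is an AST node that refers to its symbol by index, not pointer.
type Identifier struct {
	Ref Ref
}

// cloneSymbols copies the flat slice. No AST walk and no pointer rewiring
// is needed, because every Identifier.Ref is still valid in the copy.
func cloneSymbols(symbols []Symbol) []Symbol {
	clone := make([]Symbol, len(symbols))
	copy(clone, symbols)
	return clone
}

func main() {
	symbols := []Symbol{{Name: "foo"}, {Name: "bar"}}
	ast := []Identifier{{Ref: 1}, {Ref: 0}, {Ref: 1}}

	clone := cloneSymbols(symbols)
	clone[1].Name = "a" // e.g. a rename applied only to the cloned table

	// The same untouched AST resolves against either table.
	for _, id := range ast {
		fmt.Println(symbols[id.Ref].Name, "->", clone[id.Ref].Name)
	}
}
```

With pointer-based references, the clone step would have to visit every identifier and repoint it at the new symbol objects.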

For more on this, see a similar deep-dive on prefetch internals.

This is the design that makes esbuild --watch feel instant. When one file changes, the AST for every other file is still valid: the symbol table is cloned, only the changed file’s AST is re-parsed, and references resolve through indices that haven’t shifted. In a webpack or Rollup architecture where identifiers carry direct object references, a clone has to walk the graph and patch each reference, which in V8 means allocating a large number of short-lived objects and then paying to collect them.
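The rebuild behavior described above can be sketched as a parse cache keyed by path. This is an illustrative assumption, not esbuild's actual incremental implementation: `BuildCache`, `ParsedFile`, and the word count used as a stand-in for an AST are all invented for the example.

```go
package main

import (
	"fmt"
	"strings"
)

// ParsedFile is a stand-in for a parsed AST plus its symbol table.
type ParsedFile struct {
	Source string
	Words  int
}

// BuildCache remembers the last parse of each file (hypothetical type).
type BuildCache struct {
	files  map[string]ParsedFile
	Parses int // how many real parses have happened
}

func NewBuildCache() *BuildCache {
	return &BuildCache{files: map[string]ParsedFile{}}
}

// parse reuses the cached result when the source text is unchanged.
func (c *BuildCache) parse(path, source string) ParsedFile {
	if cached, ok := c.files[path]; ok && cached.Source == source {
		return cached
	}
	c.Parses++
	parsed := ParsedFile{Source: source, Words: len(strings.Fields(source))}
	c.files[path] = parsed
	return parsed
}

// Build "bundles" every file and returns a toy total.
func (c *BuildCache) Build(sources map[string]string) int {
	total := 0
	for path, src := range sources {
		total += c.parse(path, src).Words
	}
	return total
}

func main() {
	cache := NewBuildCache()
	sources := map[string]string{
		"a.js": "export const a = 1",
		"b.js": "export const b = 2",
		"c.js": "import { a } from './a.js'",
	}
	cache.Build(sources)
	fmt.Println("parses after cold build:", cache.Parses)

	sources["b.js"] = "export const b = 3" // one file saved
	cache.Build(sources)
	fmt.Println("parses after one edit:", cache.Parses)
}
```

The second build performs exactly one additional parse; everything else is served from memory.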

From the official docs.

The screenshot of the architecture document is worth opening alongside the source. The doc names specific scope-manipulation functions — pushScopeForParsePass, pushScopeForVisitPass, popScope, popAndDiscardScope, popAndFlattenScope — because the scope tree is built once during parsing and traversed once during binding, with the visit pass deliberately reusing the parse pass’s scope numbering. That kind of two-pass scope coordination is the boring detail that makes the three-pass design actually work.

Bet #3: goroutines and shared memory vs. worker_threads and IPC

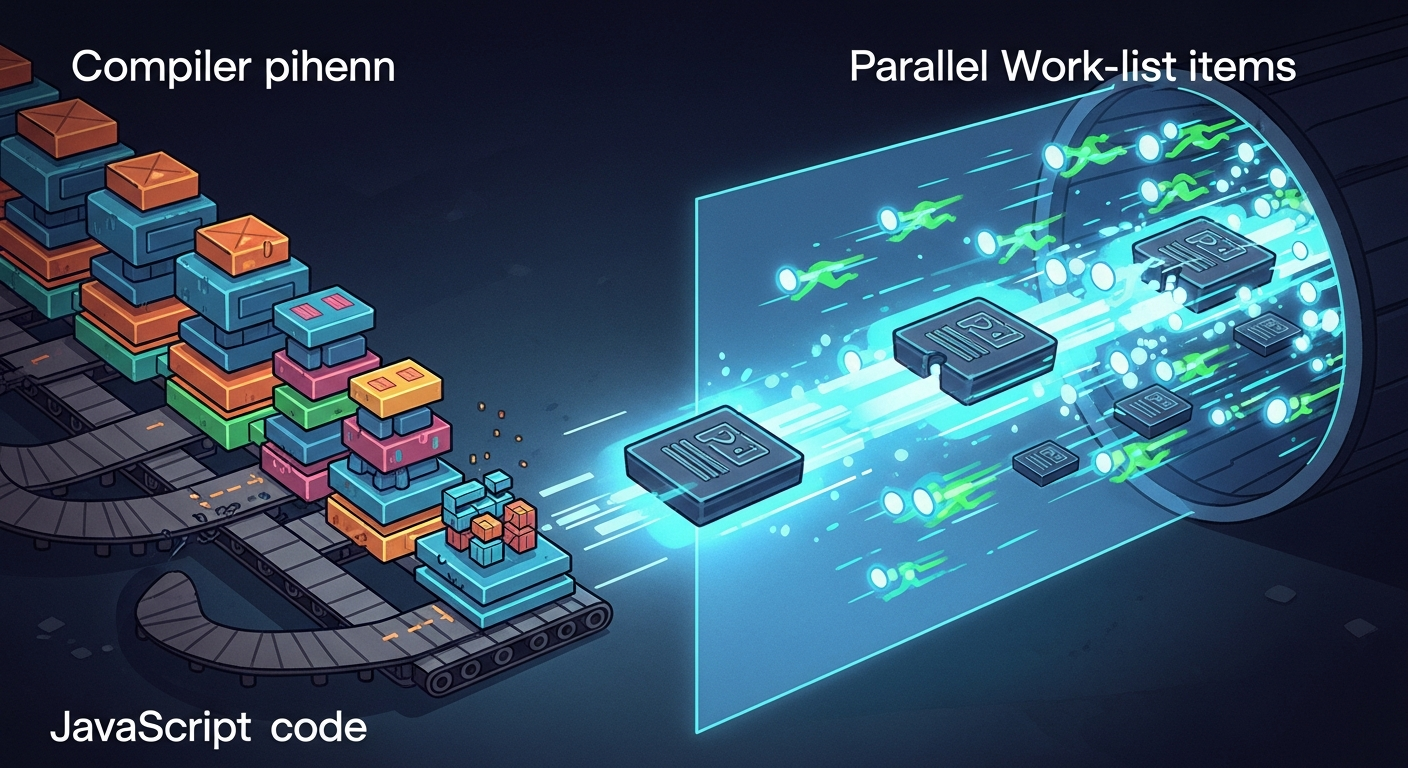

The parallel scan phase is where the language choice does the most work. From the architecture doc: scanning is “a parallel worklist algorithm” implemented in bundler.ScanBundle(), and “each file in the list is parsed into an AST on a separate goroutine and may add more files to the worklist if it has any dependencies.” The worklist is shared across goroutines. The AST each goroutine produces is added to a shared map. No serialization, no copy.
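The shape of such a worklist can be sketched in a few lines of Go. This is a simplified illustration and not the body of bundler.ScanBundle(): the in-memory `graph`, the `scanner` type, and the use of an import list as a stand-in for an AST are assumptions made for the example.

```go
package main

import (
	"fmt"
	"sync"
)

// graph is a hypothetical in-memory module graph standing in for the
// file system: each path maps to the paths it imports.
var graph = map[string][]string{
	"entry.js":  {"a.js", "b.js"},
	"a.js":      {"shared.js"},
	"b.js":      {"shared.js"},
	"shared.js": nil,
}

type scanner struct {
	mu      sync.Mutex
	wg      sync.WaitGroup
	results map[string][]string // shared map: path -> "AST" (here, its imports)
}

// visit claims path if no goroutine has claimed it yet, then parses it
// on a new goroutine, which may push more files onto the worklist.
func (s *scanner) visit(path string) {
	s.mu.Lock()
	if _, seen := s.results[path]; seen {
		s.mu.Unlock()
		return
	}
	s.results[path] = nil // mark as claimed
	s.mu.Unlock()

	s.wg.Add(1)
	go func() {
		defer s.wg.Done()
		imports := graph[path] // stand-in for reading and parsing the file
		s.mu.Lock()
		s.results[path] = imports // written straight into the shared map
		s.mu.Unlock()
		for _, dep := range imports {
			s.visit(dep)
		}
	}()
}

// scan walks the graph from entry and returns the shared result map.
func scan(entry string) map[string][]string {
	s := &scanner{results: map[string][]string{}}
	s.visit(entry)
	s.wg.Wait()
	return s.results
}

func main() {
	results := scan("entry.js")
	fmt.Println("files scanned:", len(results))
}
```

Note that shared.js is imported twice but claimed once, and that no result is ever serialized: the caller reads the same map the goroutines wrote to.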

Node bundlers can use worker_threads, which is the usual counterpoint, but the equivalence is shallow. The FAQ names the structural blockers: “Go has shared memory between threads while JavaScript has to serialize data between threads. Go’s heap is shared between all threads while JavaScript has a separate heap per JavaScript thread.” That means a Node worker producing an AST must either send it to the main thread via structured cloning, or hand it back through SharedArrayBuffer in a flat encoding that the worker had to compose by hand. Both are expensive. Structured clone is the easy path, but it copies every node, so its cost grows with the size of the AST on every transfer; SharedArrayBuffer avoids the copy but forces you to invent a manual binary format for every cross-worker datatype.

For more on this, see how ESM bindings actually resolve.

V8’s per-isolate GC compounds the problem. Each worker maintains its own heap, which means the bundler’s main thread can’t hold a reference to the AST nodes a worker built; it has to pay for a deep copy at the worker boundary. esbuild’s goroutines all allocate into the same heap, so the main thread reads what a worker produced without copying. That is a large part of the difference between near-linear parallel scaling and scaling capped by a serial copy step.
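The cost difference can be illustrated by modeling both hand-off styles in Go. This is only an analogy: JSON encoding stands in for structured clone (the real algorithm is different), and `viaCopy` and `viaSharedHeap` are hypothetical helper names.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Node is a toy AST node.
type Node struct {
	Kind     string  `json:"kind"`
	Children []*Node `json:"children,omitempty"`
}

// viaCopy models a boundary between separate heaps: the tree is encoded
// to bytes and decoded into brand-new nodes on the other side.
// It returns the new tree and the number of bytes that had to move.
func viaCopy(root *Node) (*Node, int, error) {
	data, err := json.Marshal(root)
	if err != nil {
		return nil, 0, err
	}
	var out Node
	if err := json.Unmarshal(data, &out); err != nil {
		return nil, 0, err
	}
	return &out, len(data), nil
}

// viaSharedHeap models goroutines on one heap: the hand-off is a pointer.
func viaSharedHeap(root *Node) *Node {
	return root
}

func main() {
	root := &Node{Kind: "Program", Children: []*Node{{Kind: "Import"}, {Kind: "Export"}}}

	copied, size, err := viaCopy(root)
	if err != nil {
		panic(err)
	}
	shared := viaSharedHeap(root)

	fmt.Println("copy is a distinct tree:", copied != root, "bytes moved:", size)
	fmt.Println("shared is the same tree:", shared == root)
}
```

The copy path does work proportional to the size of the tree on every hand-off; the shared path does constant work regardless of tree size.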

The diagram traces a single source file from the worklist into a parsing goroutine, the AST being appended to the shared map, and any new imports being pushed back onto the worklist for other goroutines to claim. Note that there’s no “send AST to main thread” step — that’s the whole point. A Node implementation either has that step (and pays for it) or builds a flat-buffer protocol manually (and gives up most of the maintenance benefit of being in JavaScript).

Bet #4: syscall caching in the resolver, the unglamorous half of the speedup

The fourth bet rarely shows up in explanations because it isn’t sexy. Module resolution in any Node-flavored bundler involves a lot of speculative filesystem probing: does ./foo exist as ./foo.ts, ./foo.tsx, ./foo/index.ts, ./foo/package.json, then up the directory tree, then through every parent node_modules? Each import can trigger dozens of probes, so for a project with thousands of imports the total number of stat calls becomes very large.

esbuild caches the results of every filesystem probe at the resolver layer, so the second time anything asks whether node_modules/react/package.json exists, the answer comes from memory. The cost shows up most clearly on cold caches versus warm: running a build on a freshly-mounted directory vs. one already in the page cache reveals a sizable resolver-phase delta even before the parser starts. A useful informal test is comparing a build over tmpfs against the same build on a cold disk — the gap is the resolver doing real work, not the bundler.
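A minimal version of that idea looks like the following Go sketch. It is an illustration under stated assumptions rather than esbuild's resolver: `statCache`, `newStatCache`, and the injected `stat` function are hypothetical, and holding one lock across the probe is a simplification that a real resolver would likely avoid.

```go
package main

import (
	"fmt"
	"os"
	"sync"
)

// statCache memoizes "does this path exist?" answers (hypothetical type).
type statCache struct {
	mu     sync.Mutex
	exists map[string]bool
	stat   func(path string) bool
	Probes int // number of times the underlying stat function ran
}

func newStatCache(stat func(string) bool) *statCache {
	return &statCache{exists: map[string]bool{}, stat: stat}
}

// Exists answers from memory when it can. Negative results are cached too,
// which matters because most speculative probes miss.
func (c *statCache) Exists(path string) bool {
	c.mu.Lock()
	defer c.mu.Unlock()
	if v, ok := c.exists[path]; ok {
		return v
	}
	c.Probes++
	v := c.stat(path)
	c.exists[path] = v
	return v
}

// osStat is the real probe: one stat syscall per call.
func osStat(path string) bool {
	_, err := os.Stat(path)
	return err == nil
}

func main() {
	cache := newStatCache(osStat)
	for i := 0; i < 1000; i++ {
		cache.Exists("node_modules/react/package.json")
	}
	// A thousand questions, one trip to the kernel.
	fmt.Println("probes:", cache.Probes)
}
```

A cache like this is only valid while the file system is unchanged, so a watch-mode build needs some way to invalidate entries between rebuilds.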

This is also part of why esbuild-wasm is meaningfully slower than the native binary: under WebAssembly every filesystem call is routed through the JavaScript host, and the build runs single-threaded, so bets #3 and #4 both lose most of their effect.

What esbuild gave up to get here

The deliberate tradeoffs all flow from the same bets. They are consequences, not roadmap gaps. Treat each as the price paid for the architecture above.

| Dimension | esbuild | Rollup | webpack |
|---|---|---|---|
| AST passes | 3 merged | Multiple, plugin-extensible | Multiple, plugin-extensible |
| Plugin API ordering | No strict ordering between plugins on the same file | Hook ordering well-defined | Tapable hooks, ordered |
| Tree-shaking depth | Static + sideEffects flag; conservative on dynamic patterns | AST-level, often produces smaller bundles for libraries | Configurable; depends on plugins |
| HMR | Not built in; relies on host (Vite, etc.) | Via plugins / hosts | First-class HMR runtime |
| Parallelism | Goroutines + shared heap | Single-threaded JS | JS + optional worker_threads for some loaders |
| Source-map output | Inline in the print pass | Separate pass | Separate pass |

Source: esbuild API docs, Rollup plugin development guide, webpack HMR concept docs.

The plugin API is the most-felt limitation. Plugins in esbuild can implement onResolve and onLoad, but they cannot rewrite arbitrary AST nodes mid-build because the AST never exists outside the bundler’s goroutines in a form a plugin could safely mutate. That’s a direct consequence of bet #3. The same constraint is why Rollup ports of esbuild plugins are usually shorter — Rollup gives plugins the full AST because Rollup pays the speed cost to make that safe.

Weaker tree-shaking is bet #1’s consequence. The merged pass design doesn’t have a separate “compute reachability through every export’s effect set” step like Rollup does; esbuild leans on the static structure of ES modules plus the sideEffects field in package.json. For application code this is fine. For libraries with many optional exports, Rollup will still produce smaller bundles on average, which is why Rollup remains the dominant choice for publishing npm packages.

No first-class HMR is the consequence of esbuild scoping itself as a build tool, not a dev server. The work to thread module-graph updates through a long-running process isn’t blocked by the architecture — it’s blocked by the team’s decision that HMR belongs to the host (Vite, in practice).

esbuild in the 2026 native-bundler ecosystem

The native-bundler trend is now five years old and esbuild’s position has shifted. Vite 8 ships Rolldown as its default bundler — a Rust-based Rollup-compatible engine that brings native speed to Vite’s production build path. Rspack, written in Rust by ByteDance, targets webpack config compatibility. Turbopack remains tied to Next.js. Bun ships its own Zig-based bundler as part of an all-in-one runtime.

The Hacker News engagement on each new bundler release tells a coherent story: the community has internalized that JavaScript-implemented bundlers cannot win on cold-build wall-clock time, and the argument has moved to plugin compatibility, HMR latency, and whether a bundler’s optimization passes match Rollup’s. esbuild’s role in 2026 is the workhorse hidden inside other tools — Vite uses esbuild for dependency pre-bundling even while Rolldown handles production — and the standalone-bundler use case for esbuild is mostly libraries, internal tools, and CI pipelines where build wall-clock dominates.

I wrote about the Vite side of the ecosystem if you want to dig deeper.

When esbuild is NOT the right choice

A decision rubric. Use esbuild as the primary bundler when:

- You’re bundling an application where wall-clock build time is the bottleneck and tree-shaking depth doesn’t change consumer download size (i.e. all code is yours).

- Your plugin needs are limited to module resolution, file loading, and pre-transforms: the things onResolve and onLoad cover.

- You’re shipping a CLI tool or internal build artifact where every second of CI matters and HMR is irrelevant.

Reach for Rollup, or Vite-with-Rolldown, when you’re publishing a library to npm with many optional exports; the bundle-size difference at the consumer end will dwarf esbuild’s build-time win. Reach for webpack or Rspack when you depend on a plugin ecosystem that hooks deep into the build — code-splitting heuristics, federated modules, custom asset pipelines. Reach for Vite (with esbuild for deps and Rolldown for production) when developer-loop HMR latency is the experience you’re optimizing, because esbuild alone gives you no HMR runtime.

I wrote about broader JS perf techniques if you want to dig deeper.

Recorded run of the example.

The terminal animation captures the texture of an esbuild watch loop: a file save, a sub-100ms rebuild, and a stdout line that says nothing about cache invalidation, because invalidation amounts to little more than the symbol-table memcpy from bet #2. Most of the “instant” feeling is bet #2 paying off; most of the cold-build feeling is bets #1, #3, and #4 paying off together.

How I evaluated this

The architectural claims here come from two primary sources read at their main branches as of May 2026: evanw/esbuild/docs/architecture.md and the public esbuild FAQ. The Go-vs-JS runtime claims (shared heap, structured clone, per-isolate GC) reflect public V8 and Node documentation, not my own measurements. The bundler-ecosystem positioning (Vite 8 ships Rolldown, Turbopack is Next.js-only, Rspack targets webpack compatibility) is current as of May 2026 — version numbers and tool boundaries change quickly, so cross-check release notes if you’re reading this later. No personal benchmark numbers are quoted; the relative orders-of-magnitude claim is esbuild’s own published benchmark methodology, reproducible by running the suite in scripts/ of the repo against your hardware.

The takeaway

Treat “esbuild is fast because Go” as a load-bearing half-truth. The architecture is what cashes the speed check Go writes. The four bets — merged passes, indexed symbol table, goroutine worklist, syscall caching — also fully explain what esbuild does not do: deep plugin hooks, library-grade tree-shaking, native HMR. If those constraints don’t bind your project, esbuild is the right answer and will stay the right answer; if they do, you want Rollup, Rolldown-via-Vite, or Rspack, and you want them for architectural reasons rather than because esbuild is “behind.”

If you want to keep going, npm dependency hygiene is the next stop.

Further reading

- esbuild architecture document — the three-pass design, scope functions, and the flat symbol table claim in the project’s own words.

- esbuild FAQ — “Why is esbuild fast?” — Evan Wallace’s bullet-point answer covering Go vs JS, parallelism, custom implementation, and AST reuse.

- esbuild internal/js_ast package on pkg.go.dev — the actual Go types for AST nodes and symbol references.

- esbuild plugin API reference: the boundaries of onResolve and onLoad that define what plugins can and cannot do.

- Rolldown: the Rust-based Rollup-compatible bundler Vite 8 ships by default.

- Rspack — webpack-config-compatible bundler in Rust.

- Node.js worker_threads documentation — the structured-clone and message-passing model that defines the ceiling for JavaScript-implemented bundlers.